Here’s what we know so far about the new “UCMA-style” API for Microsoft Teams

You won’t find it on the recently released Microsoft Teams roadmap, but Microsoft is working on a new set of developer capabilities for Microsoft Teams.

At Ignite 2017, Microsoft gave us some information about the newest API for Microsoft Teams developers. Here’s what we know so far:

What is it?

It doesn’t have a published name yet, but has been referred to as the Programmable Voice and Video Platform. I’m going to shorten that to PvvP for now. Maybe I can make #PvvP a thing…

Think of some of the things you can do today in UCMA, around controlling the flow of video and voice. Things like handling calls and meeting flows, or other enterprise scenarios such as IVRs, call recording, call routing etc. Right now there’s no support for any manipulation of voice or video in Microsoft Teams. PvvP will address that, bringing “programmable voice and video workloads” to developers, but also adding intelligence and greater capabilities into those interactions.

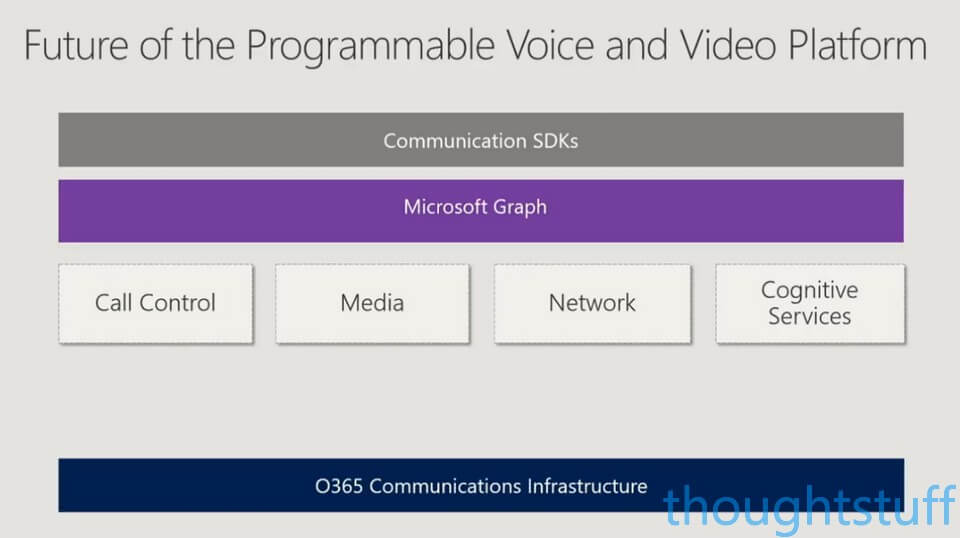

There are 4 key pillars which make up the planned offering:

- Call Control. Similar to UCMA capabilities, this covers routing scenarios, IVR flow, transfers etc.

- Media. There are two types of media capabilities planned (see below) for different types of interactions

- Network. From the start, PvvP is being designed to run across the different networks Microsoft has, such as Skype for Business and Microsoft Teams.

- Cognitive Services. As Microsoft transitions from Unified Communications to Intelligent Communications, a key part of that transition will be the ability to bring artificial intelligence services from Microsoft Cognitive Services into communication workloads, unlocking features such as transcription and translation.

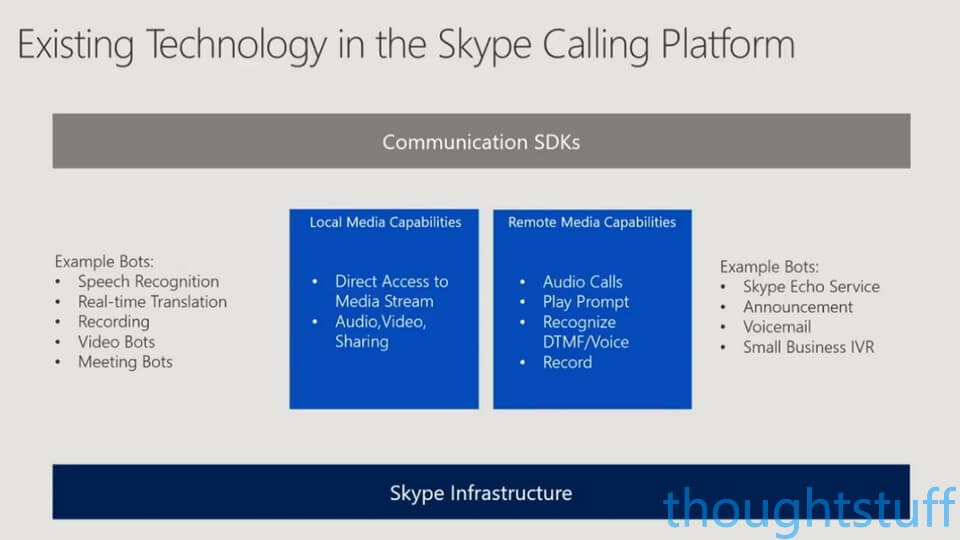

See: Media Capabilities Today in Skype Consumer

One of the interesting and surprising things we do know is that PvvP is going to be built on existing technology which is currently only available on Skype Consumer. Read on to the end of this post for some concrete actions you can take TODAY to prepare!

Within the Skype ecosystem, developers can create bots that can answer audio calls, detect and transcribe voice input, and speak messages. There is also support for doing the same with real-time video calls and screen sharing. Looking at the documentation for how it’s done in Skype, we can see the difference between the two types of media capabilities. Local media capabilities allow direct interaction with the media streams for manipulation. For instance, it will be possible to play a video stream from a bot into someone’s video stream. Remote media capabilities are for a more “hands-off” approach where direct manipulation of the media isn’t necessary: for instance, playing audio prompts or listening for DTMF tones.
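To make the “hands-off” remote media idea a little more concrete, here is a minimal sketch of an IVR menu driven purely by DTMF digits. Everything in it is hypothetical: the `MENU` table, the `handle_digit` function and the `play_prompt` / `transfer` action names are made up for illustration and are not part of any published Skype or Teams API. The point is only that the bot decides what should happen next and asks a hosted service to do it, without ever touching the audio itself.

```python
from typing import Dict, List, Tuple

# Hypothetical menu: each state has a prompt, plus either a map of
# DTMF digit -> next state, or a queue to transfer the caller to.
MENU: Dict[str, dict] = {
    "main": {
        "prompt": "Press 1 for sales or 2 for support.",
        "digits": {"1": "sales", "2": "support"},
    },
    "sales": {"prompt": "Transferring you to sales.", "transfer_to": "sales-queue"},
    "support": {"prompt": "Transferring you to support.", "transfer_to": "support-queue"},
}


def handle_digit(state: str, digit: str) -> Tuple[str, List[dict]]:
    """Return the next state and the actions to request from the hosted media service.

    The action dictionaries are illustrative placeholders, not a real wire format.
    """
    node = MENU[state]
    next_state = node.get("digits", {}).get(digit)
    if next_state is None:
        # Unrecognised key: stay where we are and replay the current prompt.
        return state, [{"action": "play_prompt", "text": node["prompt"]}]

    target = MENU[next_state]
    actions = [{"action": "play_prompt", "text": target["prompt"]}]
    if "transfer_to" in target:
        actions.append({"action": "transfer", "target": target["transfer_to"]})
    return next_state, actions


if __name__ == "__main__":
    print(handle_digit("main", "1"))
    print(handle_digit("main", "9"))
```

Because the menu is just data, the same handler would work whichever service ends up playing the prompts and collecting the tones.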

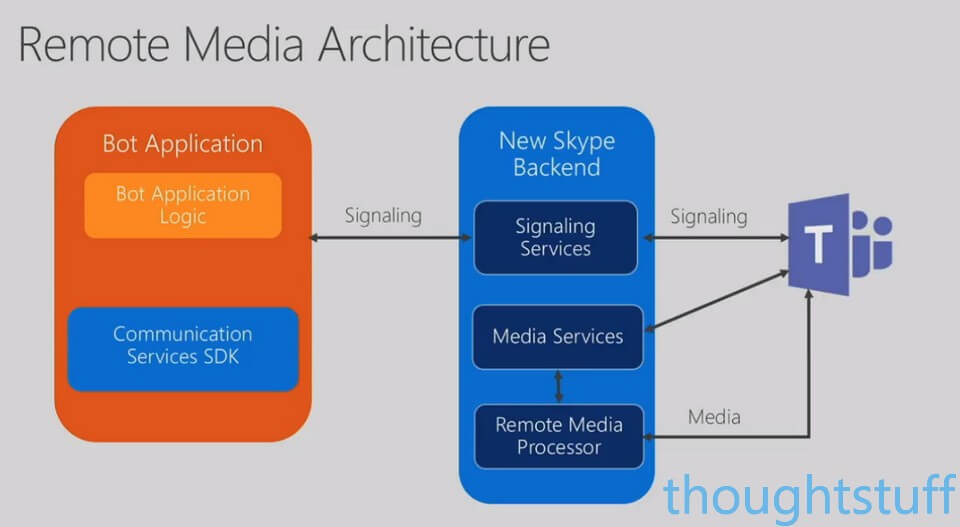

Remote Media vs Local Media

It all comes down to where the processing of the media takes place. PvvP will be built on the new Skype backend, which comes with new signaling and media services, hosted in the cloud. This is where Remote Media capabilities will be invoked. There’s no need for you to “get your hands dirty” and handle the media directly; the Azure-hosted services can handle it for you:

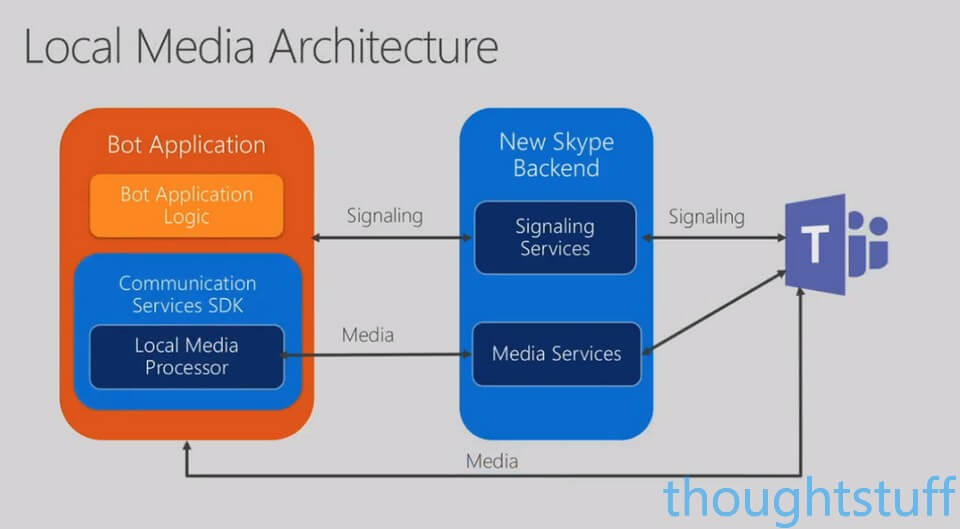

However, if you need direct access to the media in order to manipulate it in ways that aren’t supported by Remote Media, then that’s when you’ll switch to Local Media, via an SDK that runs within your bot code. You’ll still talk to the media services components of the Skype backend, but you’ll be telling it what media to send back and will be in control of what happens to the media flowing to your application:

It’s worth highlighting exactly how much control you’ll have here. You’ll be able to receive 50 frames per second of audio and 30 frames per second of video for processing. When you consider some of the amazing things you can do with Cognitive Services today (face detection, scene analysis) this capability opens up some amazing new opportunities for developers.
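To put those numbers in perspective, here is a rough back-of-the-envelope calculation of the time budget you get per frame. The frame rates come from the session; the audio format (16 kHz, 16-bit, mono PCM) is my assumption for illustration only, so treat the byte counts as indicative rather than as a statement of what the platform will deliver.

```python
# Frame rates quoted in the post.
AUDIO_FRAMES_PER_SECOND = 50
VIDEO_FRAMES_PER_SECOND = 30

# Assumed audio format (not confirmed by the source): 16 kHz, 16-bit, mono PCM.
SAMPLE_RATE_HZ = 16_000
BYTES_PER_SAMPLE = 2
CHANNELS = 1


def frame_interval_ms(frames_per_second: int) -> float:
    """Time available to process one frame before the next one arrives."""
    return 1000 / frames_per_second


def audio_frame_bytes(
    frames_per_second: int = AUDIO_FRAMES_PER_SECOND,
    sample_rate_hz: int = SAMPLE_RATE_HZ,
    bytes_per_sample: int = BYTES_PER_SAMPLE,
    channels: int = CHANNELS,
) -> int:
    """Size of one audio frame under the assumed PCM format."""
    samples_per_frame = sample_rate_hz // frames_per_second
    return samples_per_frame * bytes_per_sample * channels


if __name__ == "__main__":
    print(f"Audio: one frame every {frame_interval_ms(AUDIO_FRAMES_PER_SECOND):.1f} ms")
    print(f"Video: one frame every {frame_interval_ms(VIDEO_FRAMES_PER_SECOND):.1f} ms")
    print(f"Audio frame size (assumed format): {audio_frame_bytes()} bytes")
```

In other words, any per-frame processing has to finish in roughly 20 ms for audio and about 33 ms for video if it is going to keep up with the stream.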

Microsoft Graph

A big theme for Microsoft Ignite this year was Microsoft Graph. Graph is becoming a primary source of interaction for developers to Office 365. All of the capabilities of this new API will be delivered via Graph.

Over time we’re going to see more and more services exposed through Graph, and not just for manipulating data, as is the case today, but increasingly for performing actions and interacting with live objects, such as conferences and calls.

Here’s the planned design for how the 4 key areas of the new PvvP will be delivered:

When is it coming (and what can I do today)?

There have been no announcements on timelines. Interestingly, no mention of this new API appeared in the recent October 2017 Microsoft Teams roadmap. This doesn’t necessarily mean that it won’t be delivered within the timeline that the roadmap covered (up to end 2018), especially as it doesn’t fit neatly into any of the categories in that roadmap. However, good developer support for advanced capabilities such as those available in UCMA is key for many people and might be a blocker for moving workloads to Microsoft Teams today.

For developers wanting to get up to speed with PvvP before it arrives, I would encourage you to watch BRK2197 and BRK3037 from Ignite.

Also, it’s definitely worth getting up to speed with the Skype Calling Platform today, as that’s the model for the new API. Here’s what you should be reading and trying:

- For bots that can answer and process audio calls, see Conduct audio calls with Skype and GitHub samples.

- For bots that can process and manipulate video, see Real-time media calling with Skype and GitHub samples.

Hi Tom

Am I correct in assuming that the Trusted Application API is now defunct?

Also, is the SkypeWebSDK only going to be supported for on-premise S4B and not S4B Online?

Could you possibly discuss the state of the Skype WebSDK & Trusted Application API on your next weekly update?

Thanks

Brian Campbell